Redesigning the Cloud SDK + CLI for easier development

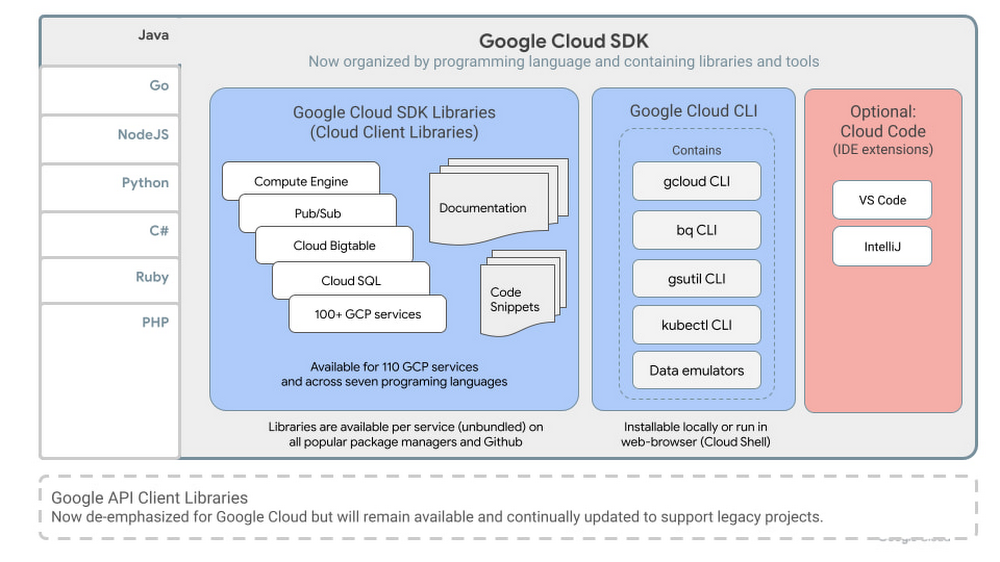

At Google Cloud they are constantly iterating on their tools to improve the developer experience and make it easier for you to interact with Google Cloud technologies. To this end, Google are pleased to announce the rebranded Google Cloud SDK. Here’s how the SDK now looks:

Easing discovery by renaming our command line tools

Google have renamed their set of command line developer tools to Google Cloud CLI to make clear that this is the command line interface for working with any Google Cloud product or service. It still contains the same gcloud, gsutil, bq, and kubectl tools, along with various data emulators and other tools for local development. All existing scripts will continue to work because the commands themselves have not changed. The installation package names have changed, but the former package names also still work. For example, on Debian you can run apt-get install google-cloud-sdk or the new apt-get install google-cloud-cli. The old package name will continue to be supported for as long as customers need to adjust to this change, but for new scripts the new package name is preferred.

Reducing coding effort with language-oriented SDKs containing their best client libraries

Google Cloud has long offered client libraries for all its services, but over time they have also iteratively released a better style of client library called Cloud Client Libraries. Today, Google are pleased to announce that Cloud Client Libraries are generally available for the vast majority of Google Cloud services. These now become part of the Google Cloud SDK for each supported language (Java, Go, Node.js, Python, C#, Ruby, and PHP).

Cloud Client Libraries simplify integration with Google Cloud APIs and provide several significant benefits over alternative libraries or raw service API calls:

- Lower learning curve: By following the idiomatic style of your chosen programming language, the client libraries feel more familiar and approachable so you can start coding effectively, faster.

- Faster integrations with pagination, long running operations, and other helper methods: Reduce repetitive boilerplate code by leveraging helper functions for common use cases like working with large datasets or dealing with asynchronous processes.

- Easier integrations across multiple products: By exposing consistent high-level patterns for authentication, setup, and configuration, you can work faster across multiple Google Cloud products.

- More centralized and up-to-date documentation: Automated documentation generation (available on cloud.google.com) gives you more consistent and accurate technical reference documentation, and a single go-to place to find them.

- Code snippets: View sample code snippets for every method* to help your development process.

*additional language support in the coming months

To simplify discovery, use, and your documentation experience, Google are de-emphasizing their historical libraries (Google API Client Libraries) and recommending Cloud Client Libraries for all new projects. For your legacy projects, Google will continue to support and update all previous-style Google API Client Libraries and their documentation, as they remain widely used.

Bundling language-oriented frameworks, guides, and tools as available

By structuring their SDKs by programming language, Google will also now include in each SDK all language-specific frameworks and tools, as available, along with essential language-agnostic tools.

For example, the Google Cloud SDK for Java contains:

- Cloud SDK Libraries (Cloud Client Libraries for Java)

- Spring Cloud GCP (Spring Framework for Java on Google Cloud)

- Google Cloud CLI

- Use cases and guides (more coming)

- Optional tooling (Cloud Code IDE extensions)

What’s next for the Google Cloud SDK?

Google will continue listening to your feedback (just click ‘feedback’ on any technical docs page) to help define what an SDK should look like and contain in a cloud-first world. As always, their goal for the Google Cloud SDK is to help you do your best work on Google Cloud by accelerating your productivity, reducing your learning curve, and giving you easier access to the tools you need.

Infrastructure-as-Code (IaC) tools like Terraform are experiencing fast-growing adoption by the developer community. This is no surprise because they greatly reduce the operational overhead associated with deploying and managing cloud infrastructure. In support of this growing trend, today Google are pleased to announce Google Cloud CLI’s preview release of Declarative Export for Terraform.

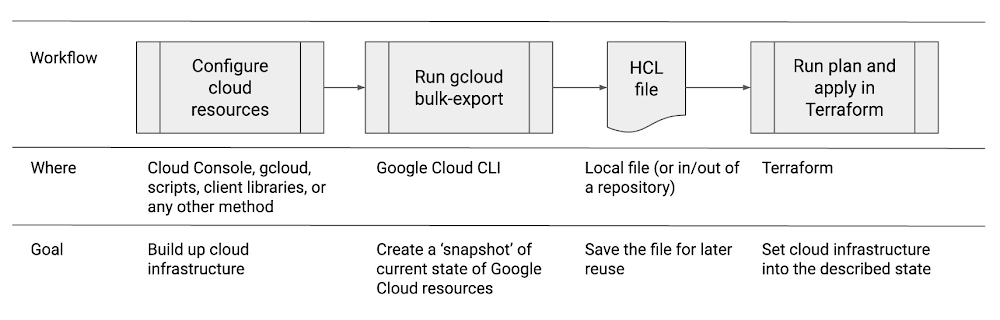

Declarative Export allows you to export the current state of your Google Cloud infrastructure into a descriptive file compatible with Terraform (HCL) or Google’s KRM declarative tooling. Here’s how it works and why this is useful to your day-to-day cloud development workflows.

What problem does this solve?

A common gap in IaC workflows on Google Cloud is how to actually create an HCL Terraform file describing all your Google Cloud resources and their states if you did not begin your cloud journey this way. It would be like trying to draw up the blueprints for a house after it was already built, step by step, by people in the field without documentation of what happened or in what order.

Declarative Export makes it easier to migrate existing Google Cloud resources to Terraform. It also protects you from incorrect configurations and drift.

To export all resources in a folder to a local directory in Terraform format, run:

$ gcloud beta resource-config bulk-export --folder=12345 --resource-format=terraform --path=/path/to/dir/

To generate a main.tf file in the current directory using the gcloud default values for zone, region, and project, run:

$ gcloud alpha resource-config terraform init-provider

This command generates a main.tf file that configures the Google Cloud Terraform provider.

To generate an import script named import.sh and a module file named modules.tf based on the files exported to a local directory, run:

$ gcloud beta resource-config terraform generate-import /path/to/dir/ --output-script-file=import.sh --output-module-file=modules.tf
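The three commands above can be chained into one script. The following is a minimal sketch, not an official workflow: the function name `export_to_terraform` is hypothetical, the folder ID and directory are placeholders passed in by the caller, and the alpha and beta command groups may change between gcloud releases.

```shell
#!/bin/sh
# Sketch only: chains the three commands shown in the text.
# export_to_terraform is a hypothetical helper name; the folder ID and
# export directory are placeholders supplied by the caller.
export_to_terraform() {
  folder_id="$1"
  export_dir="$2"

  # 1. Export the folder's resources as Terraform (HCL) files.
  gcloud beta resource-config bulk-export \
    --folder="$folder_id" \
    --resource-format=terraform \
    --path="$export_dir" || return 1

  # 2. Write main.tf using gcloud's default project, region, and zone.
  gcloud alpha resource-config terraform init-provider || return 1

  # 3. Build import.sh and modules.tf from the exported files.
  gcloud beta resource-config terraform generate-import "$export_dir" \
    --output-script-file=import.sh \
    --output-module-file=modules.tf
}

# Example call (placeholder values):
#   export_to_terraform 12345 /path/to/dir/
```

Calling the function runs the three steps in order and stops at the first one that fails, so a failed export never produces a stale import script.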

With Google Cloud CLI’s Declarative Export feature, you can use any tools of your choice to ‘build up’ your cloud infrastructure – Cloud Console, the CLI, your existing gcloud or Ansible scripts, or any other method – and then use Declarative Export to create a Terraform-compatible file describing the current state of every resource. Using the earlier house analogy, it is like being able to x-ray the already built home to reproduce the original plans used to construct it.

Benefits over imperative approaches:

Traditionally, developers and operators configure and manage their cloud infrastructure through a series of sequential or procedural steps. For example, the operator might first launch a virtual machine, then add a disk, some firewall and load-balancing rules, and so forth. As the complexity of cloud infrastructure grows, these imperative approaches become both more toilsome and more error-prone. If a step is done out of order, against the wrong resource, or with the wrong instruction, the infrastructure will not reach the desired end state.

Compounding this complexity, as development teams grow, so too does the likelihood that different people (or scripts) make changes to the same infrastructure. This drift over time can cause unexpected behaviors across the deployment and make it hard to track what changed, much less how to revert to a known good state.

How IaC helps:

The rise of declarative IaC tools enables developers to instead take a different approach: describe the desired target state of the infrastructure, and then let the IaC tools do the work to get the infrastructure into that state.

Terraform has, for several years, provided this type of IaC solution. Terraform uses the HashiCorp Configuration Language (HCL) to describe the specific cloud resources and the desired end state. These files can be edited to describe changes to the end state and then re-applied by Terraform, which does whatever is needed to get the infrastructure into the described state. The tooling itself determines what specific invocations need to be made to get to the goal. This frees the operator from having to manage the many sub-steps and their order.

Additionally, expressing the state of infrastructure as a text file means this file can be checked in to a repository to gain all the benefits of repository management: diffs, conflict resolution, commit notes, timestamps, and so forth. Now a developer can select a known revision from their history and ‘snap’ to it by applying that specific HCL file using Terraform.
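As an illustration of these repository benefits, the sketch below assumes the exported HCL files are committed to a Git repository. The function names, revision names, and directory are hypothetical; only standard `git diff` and `git checkout` invocations are used.

```shell
#!/bin/sh
# Sketch only: assumes the exported .tf files are tracked in a Git repository.

# Show how the Terraform files in a directory changed between two revisions.
diff_config() {
  old_rev="$1"
  new_rev="$2"
  config_dir="$3"
  git diff "$old_rev" "$new_rev" -- "$config_dir"
}

# Restore the files in a directory to their content at a chosen revision.
# Files added after that revision are left in place, so review the result
# before applying it with Terraform.
restore_config() {
  rev="$1"
  config_dir="$2"
  git checkout "$rev" -- "$config_dir"
}

# Example calls (hypothetical tag name and path):
#   diff_config known-good HEAD infra/
#   restore_config known-good infra/
```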

Primary use cases for Declarative Export

The HCL file can be used to run a plan and apply in Terraform. This will put the infrastructure into the described target state.
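A minimal sketch of that plan-and-apply step follows. It assumes the working directory holds the exported HCL files together with the generated main.tf and import.sh, and that import.sh is a POSIX shell script that brings the existing resources into Terraform state. The function name `apply_exported_config` is hypothetical.

```shell
#!/bin/sh
# Sketch only: apply_exported_config is a hypothetical helper name.
apply_exported_config() {
  workdir="$1"
  (
    cd "$workdir" || exit 1

    # Download the provider configured in main.tf.
    terraform init -input=false || exit 1

    # Assumption: import.sh records the existing resources in Terraform state.
    sh ./import.sh || exit 1

    # Save the plan to a file so that apply runs exactly what was planned.
    terraform plan -input=false -out=tfplan || exit 1

    # In real use, review the plan output before this step.
    terraform apply -input=false tfplan
  )
}

# Example call (placeholder path):
#   apply_exported_config /path/to/dir/
```

Saving the plan with `-out` and applying that file is a design choice: it keeps the applied changes identical to the ones that were planned, even if the infrastructure changes in between.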

Google anticipate Declarative Export being used as follows:

- Resolving infrastructure drift: over time, and especially when multiple people are involved in configuring infrastructure on behalf of an organization, the infrastructure will stray from a known accurate and correct original state. By using Google Cloud CLI’s Declarative Export to capture the known good state before drift occurs, the operator can later use Terraform to get the drifted infrastructure back into that known good state.

- Cloning an environment: complex stacks may take hours or days to properly configure. Rather than redoing all the steps manually, or spending effort to script the steps, you can instead simply export the declarative depiction of the entire environment and then use Terraform to create clones, for example to spawn a staging environment or to copy a stack into another region. In these cases you would apply minor tweaks to the HCL file, such as changing the zone or region, before reapplying it.

- Deploying templatized software stacks: if you are a company with pre-defined stacks that you either sell as a SaaS product or use for internally approved application instances, you can use your tools of choice to set up the ‘perfect’ Google Cloud infrastructure configuration, export it, and then recreate multi-resource instances on demand.

- Troubleshooting and / or reverting in case of emergencies: if you can ascertain when a problem started, you can diff the associated and previous HCL files to see precisely which changes might be the cause. Or, you can apply a known good HCL file to get back into a working state, de-escalating the emergency and allowing more organized remediation.

- Using declarative tools for day-to-day workflows: you may find yourself more productive editing HCL files than using the Cloud Console or CLI to make changes across your infrastructure.
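Two of the use cases above, resolving drift and cloning an environment, can be sketched as shell helpers. This is an illustration under assumptions rather than a recommended implementation: the function names and region values are hypothetical, the .tf files are assumed to sit directly in the given directory, and the region swap is a plain text substitution that also rewrites zone names sharing the region prefix. The drift check relies on `terraform plan -detailed-exitcode`, which exits with 0 when there is nothing to change, 2 when changes are pending, and 1 on error.

```shell
#!/bin/sh
# Sketch only: check_drift and retarget_region are hypothetical helper names.

# Report whether live infrastructure differs from the HCL in a directory.
# Returns 0 for no drift, 2 for drift, 1 if the plan could not be produced.
check_drift() {
  workdir="$1"
  rc=0
  (
    cd "$workdir" || exit 1
    terraform plan -detailed-exitcode -input=false >/dev/null
  ) || rc=$?
  case "$rc" in
    0) echo "no drift" ;;
    2) echo "drift detected" ;;
    *) echo "plan failed"; rc=1 ;;
  esac
  return "$rc"
}

# Copy the .tf files to a new directory, replacing one region name with another.
# Assumption: a plain text substitution is good enough for the files at hand.
retarget_region() {
  src_dir="$1"
  dst_dir="$2"
  old_region="$3"
  new_region="$4"
  mkdir -p "$dst_dir" || return 1
  for tf_file in "$src_dir"/*.tf; do
    [ -f "$tf_file" ] || continue
    sed "s/$old_region/$new_region/g" "$tf_file" \
      > "$dst_dir/$(basename "$tf_file")" || return 1
  done
}

# Example calls (placeholder paths and regions):
#   check_drift ./exported
#   retarget_region ./exported ./exported-eu us-central1 europe-west1
```

Because a zone suffix valid in one region may not exist in another, the retargeted files should be reviewed before they are applied.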

Current Limitations with this preview release:

- Many but not all Google Cloud resources are covered. To list all exportable resource types in project my-project in json format, run:

$ gcloud beta resource-config list-resource-types --format=json --project=my-project

- Manual apply in Terraform. You will need to use the gcloud and terraform tools together to ‘import’, plan, and apply the Terraform files.

What’s next

In the future, these steps will be simplified directly from within the gcloud CLI using gcloud beta resource-config terraform generate-import or similar. Google will also continue to add support for additional resources.